|

Robotics Institute, Carnegie Mellon University

I am currently a full-time Applied Scientist Intern at Frontier AI & Robotics (FAR), Amazon. My research focuses on 4D vision and its applications to robotics (humanoid locomotion & loco-manipulation). I earned my M.S. at the Robotics Institute, School of Computer Science, Carnegie Mellon University, where I was extremely fortunate to be advised by Prof. Deva Ramanan and to collaborate with Prof. Shubham Tulsiani. Before joining CMU, I earned my B.Eng. with Honours in Electrical and Electronics Engineering from The University of Edinburgh. During my undergraduate years, I had the fortune to work with Prof. Sebastian Scherer and Prof. Chen Wang at AirLab.

Email / Google Scholar / GitHub / Twitter / LinkedIn |

|

|

We live in a dynamic 4D world, constantly seeing, understanding, and interacting with objects and environments. My goal is to bridge the gap between reconstructed virtual worlds and practical robotic applications. I develop methods that help robots perceive, understand, and interact with objects and environments from sparse observations. |

|

|

|

, Jiashun Wang*, Jeff Tan, Yiwen Zhao, Jessica Hodgins, Shubham Tulsiani, Deva Ramanan* International Conference on Learning Representations (ICLR), 2026 paper / project page / code A real-to-sim framework that turns monocular RGB video into whole-body control across diverse, complex terrains. |

|

|

, Jeff Tan, Tarasha Khurana, Neehar Peri, Deva Ramanan* International Conference on Computer Vision (ICCV), 2025 Spotlight presentation at Workshop on Generating Digital Twins from Images and Videos @ ICCV paper / project page / code The first approach that enables free-view synthesis for 4D dynamic scene reconstruction under sparse-view capture. |

|

|

, Bowen Li, Chen Wang, Sebastian Scherer* IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2024 paper / project page / code Class-agnostic relations for few-shot detection without fine-tuning, enabling fast and efficient field deployment. |

|

|

Haoyang Weng, Yitang Li, Nikhil Sobanbabu, , Zhengyi Luo, Tairan He, Deva Ramanan, Guanya Shi* In submission paper / project page / code A general framework that learns whole-body humanoid-object interaction skills directly from monocular RGB videos. |

|

|

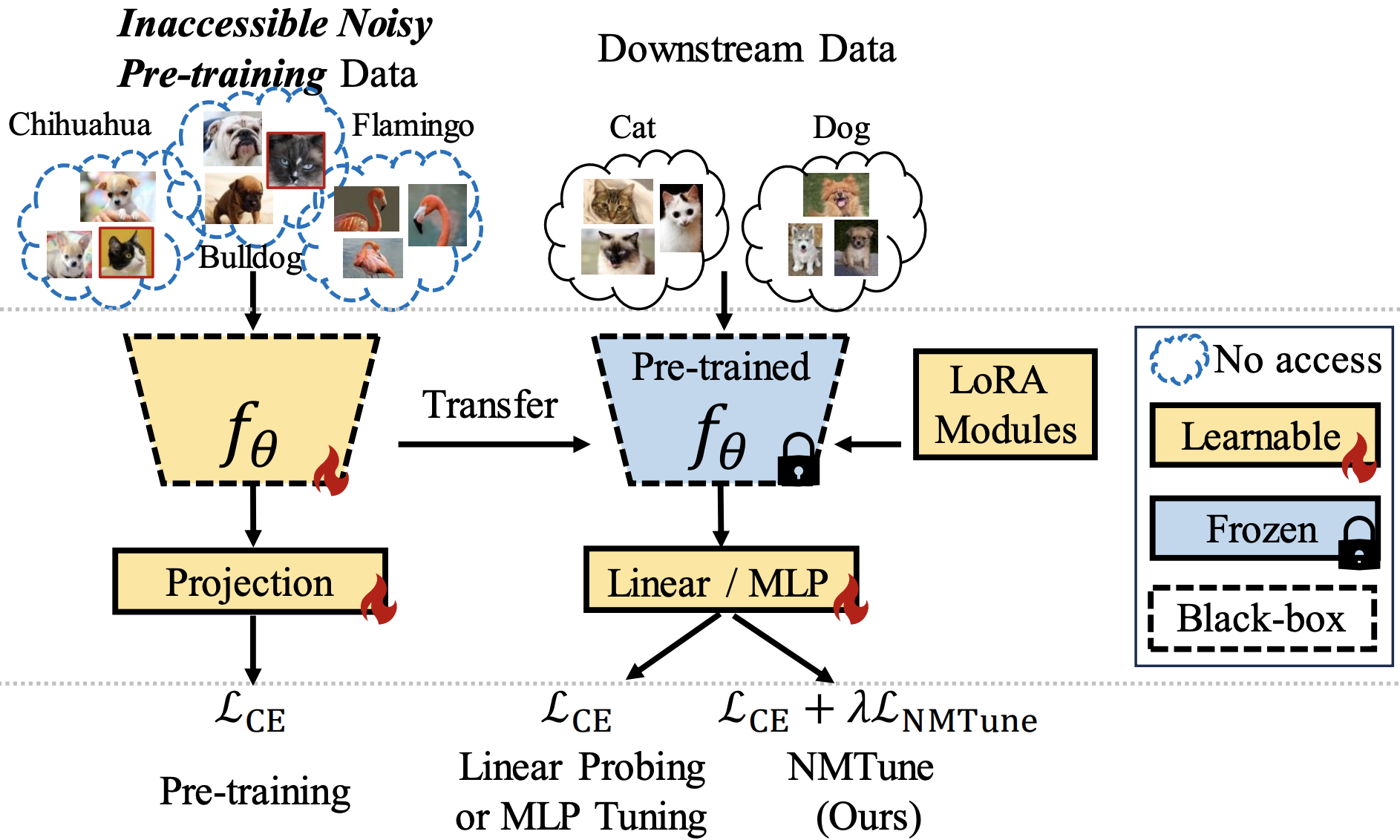

, , , , , , , IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI) paper / project page Characterizes noise in large-scale pre-training data and proposes strategies to mitigate its impact on downstream tasks. |

|

|

|

Robot Learning report Clusters motion data, trains latent variable models, and deploys a style-aware high-level controller for multi-task behaviors. |

|

|

paper / extension / project page / code Contributed physics-aware constrained optimization modules for trajectory planning and motion control. |

|

project page / code / video Developed diffusion autoencoder components for medical imaging, with a focus on MRI generation and reconstruction. |

|

|

Academic Reviewer

|

|

|

I am confident that my "boundaryless", "website-stalking" friends have contributed to at least 74.47% of the traffic here. |

|

Template adapted from Qitao Zhao (credit to Yijia Weng & Jon Barron). |